This article originally appeared on CNBC and was written by Cindy Ord for CNBC.

Do a Google search for Basecamp, a web-based project management tool company, and you might see one or more ads for competitors show up in results above the actual company.

Basecamp CEO and co-founder Jason Fried sounded off against the practice Tuesday, calling it a “shakedown” and saying it’s like ransom to have to pay up just to be seen in results.

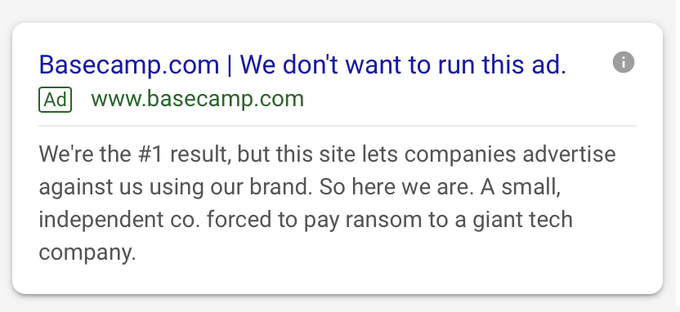

“When Google puts 4 paid ads ahead of the first organic result for your own brand name, you’re forced to pay up if you want to be found,” he tweeted Tuesday afternoon. “It’s a shakedown. It’s ransom. But at least we can have fun with it. Search for Basecamp and you may see this attached ad.”

The tweet includes the screenshot of an ad for Basecamp, reading “Basecamp.com | We don’t want to run this ad.” The copy says “We’re the #1 result, but this site lets companies advertise against us using our brand. So here we are. A small, independent co. forced to pay ransom to a giant tech company.”

Fried’s complaint comes as regulators are increasingly scrutinizing Google’s dominance in certain areas, including search and advertising. The Justice Department is reportedly looking into Google’s digital advertising and search operations as authorities prepare an antitrust review of tech giants’ market power, and more than 30 states are considering their own antitrust investigations. The company could face billions of dollars in fines, as it has from competition authorities in Europe, or even be forced to spin off business units like YouTube.

In an interview with CNBC, Fried said the company hadn’t previously advertised on Google but started doing so since Basecamp would sometimes show up fifth in search results under advertisements, “even though we’re the first organic result and it’s our brand.”

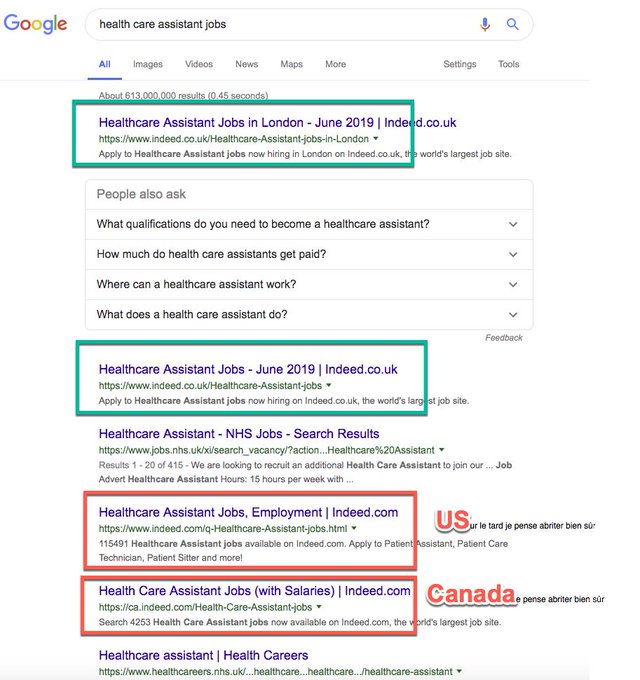

For instance, when searching for Basecamp on Google, a user might see an ad for Monday.com positioning itself as a Basecamp alternative. Monday.com didn’t immediately respond to a request for comment.

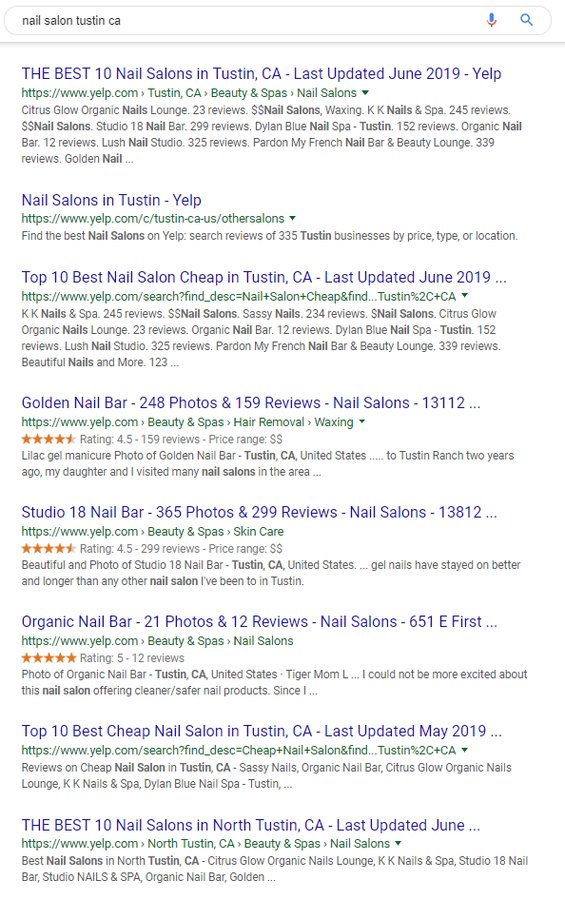

This practice, called “conquesting,” is a common way for brands to show up when potential customers search for a competitor, and it is widespread on platforms other than Google. For instance, if you search for one brand on Amazon, you might see a slew of products from other brands before you find what you were searching for.

Fried also said it can be tough for the average consumer to discern whether a listing is an ad since Google’s “Ad” qualifier is so small.

“It’s so easy to miss,” he said. “The ads look more and more like organic results.”

“It just seems completely unfair,” Fried said. “You basically have to pay protection money to Google to even have a chance.” He said the company is running the ad to stand up for small businesses having these kinds of problems with Google.

Fried also said the company has filed complaints about trademark violations with Google for ads that use Basecamp’s name, but said ads of that kind keep popping up.

In a statement, a Google spokeswoman said the company prohibits the use of trademarked terms in the text of an ad if the owner files a complaint. “Our trademark policy balances the interests of users, advertisers and trademark owners,” the statement said. “To provide users with the most relevant ads, we don’t restrict trademarked terms as keywords. We do, however, restrict trademarked terms in ad text if the trademark owner files a complaint.”

The CEO of Shopify, Tobias Lutke, shared and weighed in on Fried’s tweet.

“It’s totally crazy for google to get away with charging what’s basically protection money on your own brand name,” he wrote. “‘Nice high intend traffic you got there, would be a shame if something were to happen to it.’”

Other companies in recent weeks have discussed issues with Google ads. IAC said in early August it had seen an unanticipated increase in the cost of customers from Google, its largest source of traffic. The company said “users arriving through paid search results were up substantially, but considerably more expensive.”

Booking Holdings, the parent of Booking.com, Priceline and Kayak, has also long counted on Google for traffic, spending billions of dollars a year on ads and search engine optimization. But last month the company said it has “observed a long-term trend of decreasing performance marketing returns on investment (‘ROIs’), a trend we expect to continue” and had shifted some of its marketing spending from search to other means of advertising.
